As cameras and sensors explore computational photography, we’ll see sensor technology change in exciting new ways to support what’s now possible with faster, real-time processing.

One such technology is the Quad Bayer sensor, which Sony developed and which can already be found in the DJI Mavic Air 2. Sony has also developed a 48MP full-frame version, which we now see in the Sony A7S III and the Sony FX3.

The Quad Bayer sensor is a pretty cool advancement in sensor technology, and it has the potential to be a true game-changer for our hybrid photo/video systems.

What Is A Quad Bayer Sensor?

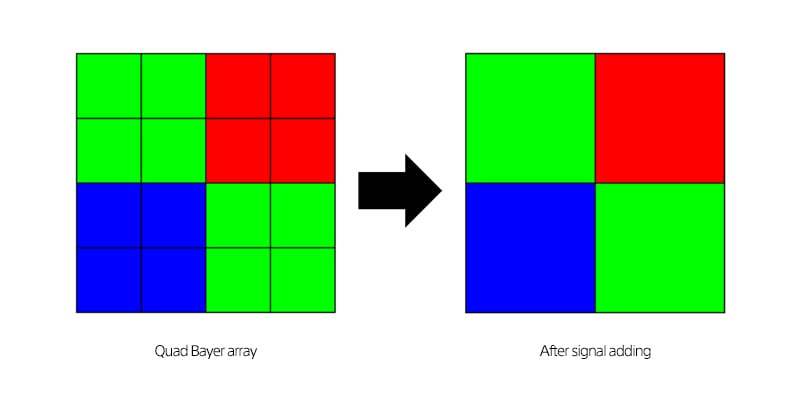

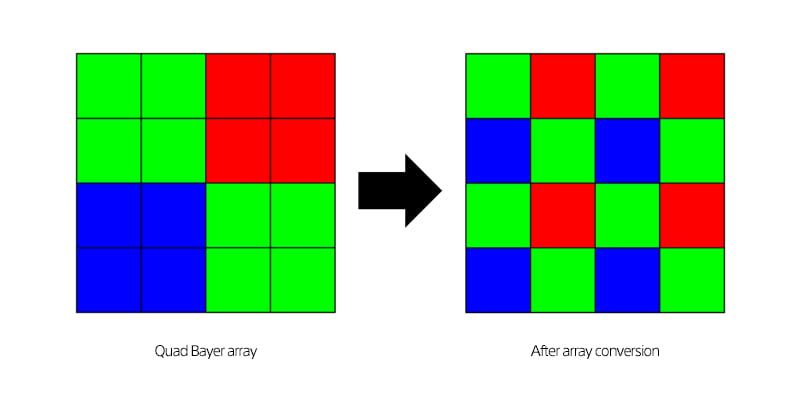

It’s a sensor in which each color patch of the Bayer filter covers a 2×2 group of four photosites, so all four photosites in a group sit behind the same color filter.

This means that each color section can function in two ways.

- As a low-megapixel sensor, the four photosites in each quad act as a single pixel. This improves sensitivity and efficiency when photon density is low, such as in low-light situations.

- As a high-megapixel sensor, each photosite reads out its own color data, which is useful when photon density is high, such as in direct sunlight.
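To make the two modes concrete, here is a minimal sketch of the low-light mode in plain Python. It assumes the commonly shown 4×4 Quad Bayer tile, where every color covers a 2×2 block, and it simply sums each block. The readings in `raw_tile` are made-up numbers, and the function names are my own, not anything from Sony's documentation.

```python
# Color filter layout of one 4x4 Quad Bayer tile (assumed layout):
# each color covers a 2x2 block of photosites.
QUAD_BAYER_TILE = [
    ["R", "R", "G", "G"],
    ["R", "R", "G", "G"],
    ["G", "G", "B", "B"],
    ["G", "G", "B", "B"],
]


def bin_2x2(raw):
    """Sum every 2x2 block of photosites into one value (low-light mode)."""
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("raw dimensions must be even")
    return [
        [
            raw[y][x] + raw[y][x + 1] + raw[y + 1][x] + raw[y + 1][x + 1]
            for x in range(0, width, 2)
        ]
        for y in range(0, height, 2)
    ]


def binned_colors(tile):
    """Color of each binned pixel, taken from the top-left photosite of its block."""
    return [
        [tile[y][x] for x in range(0, len(tile[0]), 2)]
        for y in range(0, len(tile), 2)
    ]


# Hypothetical photosite readings for a single tile, in arbitrary units.
raw_tile = [
    [10, 12, 20, 22],
    [11, 13, 21, 23],
    [30, 32, 40, 42],
    [31, 33, 41, 43],
]

binned = bin_2x2(raw_tile)
print(binned)                          # [[46, 86], [126, 166]]
print(binned_colors(QUAD_BAYER_TILE))  # [['R', 'G'], ['G', 'B']]
```

The binned result is an ordinary RGGB Bayer pattern at a quarter of the pixel count, which is why the same sensor can behave like a conventional low-megapixel sensor. The high-megapixel mode would skip `bin_2x2` and work from `raw_tile` directly.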

Applications For Photography

The Quad Bayer sensor is less useful for still photography, where you can simply always capture at the higher resolution and then scale the image down for better low-light performance.

This was a common misconception about the A7S II vs. the A7R II. People would assume the A7S II was better in low light for stills; however, once an A7R II image was scaled down to the same size, the results were about the same.

Generally, if the processing power is good enough to scale in-camera and on the fly, there is not really much downside to using higher-resolution sensors in low light.
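The reasoning behind scaling can be sketched with photon shot noise alone. An ideal pixel that collects N photons has a signal-to-noise ratio of about √N, so summing four small pixels gives the same ratio as one pixel four times the size. The sketch below deliberately ignores read noise and other real-world losses, and the photon counts are hypothetical.

```python
import math


def shot_noise_snr(photons):
    """SNR of an ideal photon-counting pixel: signal N over Poisson noise sqrt(N)."""
    return photons / math.sqrt(photons)


def downscaled_snr(photons_per_small_pixel, pixels_combined):
    """SNR after summing several small pixels (read noise deliberately ignored)."""
    return shot_noise_snr(photons_per_small_pixel * pixels_combined)


# Hypothetical numbers: one large photosite collects 400 photons, while a
# sensor with four times the pixel count collects 100 in each small photosite.
large_pixel = shot_noise_snr(400)
small_pixel = shot_noise_snr(100)
small_pixels_combined = downscaled_snr(100, 4)

print(large_pixel, small_pixel, small_pixels_combined)  # 20.0 10.0 20.0
```

Under these assumptions, the scaled-down high-resolution image matches the big-pixel sensor. In practice each small pixel adds its own read noise, so the match is close rather than exact.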

We even see cameras like the Leica M11 that let you select different resolutions by binning pixels, which actually improves dynamic range.

Applications For Videography

The Quad Bayer sensor is technically a high-resolution, high-pixel-density sensor, but with intelligent analog-to-digital conversion, it can play two different roles.

- Superior 4K low-light performance

- A high-pixel-density sensor that can produce 8K images

For video, a Quad Bayer sensor can be very useful because it lets you adjust your resolution based on your lighting conditions. In full bright light, you could capture 8K by reading every single photosite on its own; in low light, you can combine each color quad into one pixel for better low-light shooting.

Why Do You Need Bigger Photosites For Low Light?

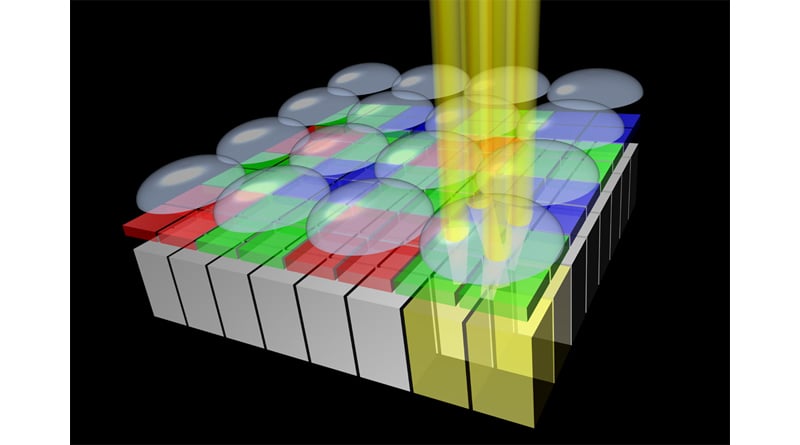

You need larger photosites for low light because, as light decreases, the density of photons decreases as well. A larger photosite allows the surface to capture a signal from a larger area, maintaining an acceptable voltage.

In daylight, there are 11,400 photons in a cubic millimeter. Each visible photon carries only a few electron volts: about 3.10 eV for violet light, the most energetic visible color, and about 2.06 eV for orange light. As the density of photons drops in low light, you need a much bigger surface to capture the same amount of energy.
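The electron-volt figures above follow from the photon energy relation E = hc/λ. Here is a small sketch that checks them. The wavelengths are my own assumption (400 nm for violet), since the text only quotes the energies.

```python
PLANCK_EV_S = 4.135667696e-15  # Planck constant in eV*s
LIGHT_SPEED_M_S = 299_792_458  # speed of light in m/s


def photon_energy_ev(wavelength_nm):
    """Energy of a single photon in electron volts, from E = h*c / wavelength."""
    return PLANCK_EV_S * LIGHT_SPEED_M_S / (wavelength_nm * 1e-9)


def wavelength_nm(energy_ev):
    """Wavelength in nanometers of a photon with the given energy in eV."""
    return PLANCK_EV_S * LIGHT_SPEED_M_S / energy_ev * 1e9


print(round(photon_energy_ev(400), 2))  # 3.1 (violet, assumed 400 nm)
print(round(wavelength_nm(2.06)))       # 602 (an orange wavelength)
```

So 3.10 eV corresponds to violet light near 400 nm, and 2.06 eV to orange light near 602 nm.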

I’m not about to do the math on photon density and ISO, but I think you get the idea. Cut the light to 1/64 (six stops), and you’ll need 64 times the surface area to absorb the same energy. Light arrives as discrete photons, each carrying a fixed amount of energy, so there is a hard limit on how much signal a given area can collect.
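The 1/64 example is easy to put into numbers. This sketch assumes the collected signal scales directly with photosite area, which is a simplification, and the function names are just for illustration.

```python
import math


def stops_lost(light_fraction):
    """Number of photographic stops lost when light falls to the given fraction."""
    return -math.log2(light_fraction)


def area_scale_needed(light_fraction):
    """Factor by which photosite area must grow to collect the same light."""
    return 1 / light_fraction


fraction = 1 / 64
print(stops_lost(fraction))                    # 6.0 stops darker
print(area_scale_needed(fraction))             # 64.0 times the area
print(math.sqrt(area_scale_needed(fraction)))  # 8.0 times the photosite width
print(math.log2(4))                            # 2.0 stops regained by a 2x2 quad
```

Combining a 2×2 quad gives four times the area, so on this simple model it buys back about two of those six stops.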

This is why there is little point in buying a high-megapixel camera if you mostly shoot in low light with fast shutter speeds. The density and energy of the available light still limit you. More pixels in a smaller configuration are less efficient at capturing this energy. Having a Quad Bayer sensor gives you the best of both worlds, as long as no strange artifacts come from having a 2×2 configuration for each color pixel array.

I’ve been trying to figure out for some time what Sony could do that would be game-changing for the A7S III. I think this is it.

The Potential Of A Quad Bayer Sensor In A Future Camera Like The Sony A7S III

The whole life and soul of the A7S II was low-light performance. A Quad Bayer sensor would allow the camera to maintain its amazing 4K low-light performance by combining each quad's data into a single pixel, and it would also allow the camera to produce an 8K image in well-lit situations by reading every photosite individually.

What else is cool about shooting 8K is that you can record with coarser chroma subsampling, like 4:2:0, and recover fuller chroma resolution in post-production by scaling down to 4K. So 8K is not useless. You can always pull a better image by scaling down a larger one. We see cameras like the Canon R5 and the Nikon Z8 doing this, oversampling 8K data for their 4K video modes.
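Here is a quick sketch of why the subsampling trick works. It assumes UHD frame sizes (7680×4320 for 8K and 3840×2160 for 4K); cameras that record DCI-width frames will differ slightly. The helper name is hypothetical.

```python
def chroma_plane_size(width, height, subsampling):
    """Resolution of each chroma plane for common Y'CbCr subsampling schemes."""
    divisors = {"4:4:4": (1, 1), "4:2:2": (2, 1), "4:2:0": (2, 2)}
    horizontal, vertical = divisors[subsampling]
    return width // horizontal, height // vertical


UHD_8K = (7680, 4320)  # assumed 8K frame size
UHD_4K = (3840, 2160)  # assumed 4K frame size

chroma_8k_420 = chroma_plane_size(*UHD_8K, "4:2:0")
chroma_4k_444 = chroma_plane_size(*UHD_4K, "4:4:4")
chroma_4k_420 = chroma_plane_size(*UHD_4K, "4:2:0")

print(chroma_8k_420)  # (3840, 2160)
print(chroma_4k_444)  # (3840, 2160)
print(chroma_4k_420)  # (1920, 1080)
```

The color planes of 8K 4:2:0 footage already have as many samples as a full 4K 4:4:4 frame, so scaling down to 4K leaves a color sample for every output pixel. Native 4K 4:2:0 only has a quarter of that.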

A Quad Bayer sensor would make the Sony A7S III an incredibly versatile tool with insane capabilities unmatched by the competition.

Update: The Sony A7S III uses a Quad Bayer sensor derived from a 48MP sensor. The camera takes all that information and combines it to produce amazing video results, but unfortunately, you can never shoot in 48MP mode; it’s locked to 4K only.
